Week in Review — February 23–27, 2026

The week underscored how artificial intelligence is reshaping cybersecurity on both the defensive and offensive sides. From a surge of venture‑capital dollars aimed at AI‑native security firms to law‑enforcement operations that leveraged AI‑generated threat intelligence, the industry is grappling with the same underlying reality: AI is expanding the attack surface while also offering new tools for mitigation. Investment, prosecutions, and product development are all accelerating around AI‑driven capabilities.

AI’s dual role runs through all four of this week’s Dark Reading stories: the funding boom for AI‑native security firms, the dismantling of cybercrime rings in Africa that relied on AI‑crafted phishing, the launch of AI‑based flaw‑finding assistants that still struggle with speed and accuracy, and the heightened focus on wireless and drone threats that will test AI‑enhanced detection systems at the 2026 FIFA World Cup. Together, they show how tightly AI advancement and cybersecurity practice are now interwoven.

As Cybersecurity Firms Chase AI, VC Market Skyrockets | Dark Reading

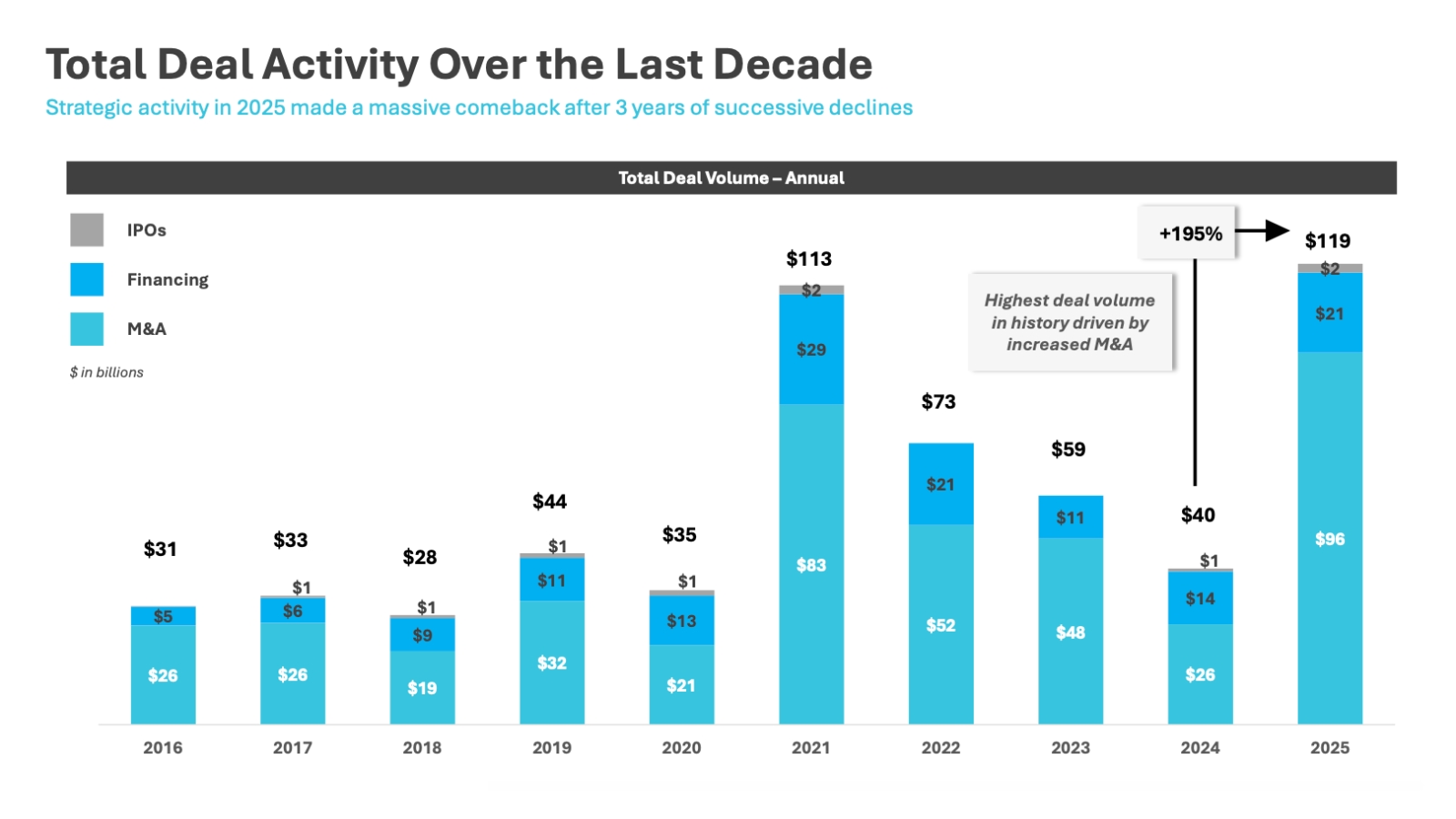

Investors put $119 billion into cybersecurity companies in 2025 across 400 mergers and acquisitions and 820 financing deals, with the financing deals accounting for nearly $21 billion. AI‑native security startups closed the most deals (144), followed closely by risk‑and‑compliance firms (137). The pace carried into 2026: January recorded 38 M&A deals, putting the annual forecast at 477 transactions. The focus on AI‑native solutions reflects both the growing use of AI agents and the need to protect the attack surface they create.

Operation Red Card 2.0 Leads to 651 Arrests in Africa | Dark Reading

Interpol and law‑enforcement teams from 16 African countries coordinated in Operation Red Card 2.0, resulting in 651 arrests and the recovery of more than $4.3 million. The operation targeted investment fraud rings in Nigeria and Kenya, a mobile‑loan‑fraud scheme in Côte d’Ivoire, and a telecom‑provider breach in Nigeria. Partners such as Trend Micro and Team Cymru supplied threat‑intelligence analysis, identifying infrastructure used for crypto‑scams that siphoned over $45 million from victims.

Flaw‑Finding AI Assistants Face Criticism for Speed, Accuracy | Dark Reading

Anthropic’s Claude Code Security, powered by Claude Opus 4.6, discovered more than 500 zero‑day vulnerabilities in open‑source projects but was criticized for slowness and a high false‑positive rate. In tests, the tool took 15–17 minutes to analyze a code sample, and two of the three issues it reported were false positives. Compared with existing static‑analysis tools, it was considerably slower and more costly, leading experts to view the current iteration as a preview rather than a production‑ready solution.

Cities Hosting Major Events Need More Focus on Wireless, Drone Defense | Dark Reading

The 2026 FIFA World Cup will require host cities to manage a complex radio‑frequency environment, including hundreds of thousands of wireless devices and autonomous drones. Experts warn that without visibility into that environment, operators cannot defend operational technology such as stadium systems or public‑safety communications. Active threats include jamming or hijacking of command‑and‑control signals, and drones may use onboard AI to bypass traditional counter‑drone measures. The event highlights the growing need for AI‑enhanced monitoring and rapid response to wireless and aerial attacks.

(Created with Ollama and GPT-OSS)